Anthropic CEO Dario Amodei released a statement following the company’s discussions with the Department of War on the use of its AI technology.

“I believe deeply in the existential importance of using AI to defend the United States and other democracies, and to defeat our autocratic adversaries. Anthropic has therefore worked proactively to deploy our models to the Department of War and the intelligence community. We were the first frontier AI company to deploy our models in the US government’s classified networks, the first to deploy them at the National Laboratories, and the first to provide custom models for national security customers,” Amodei said.

“Claude is extensively deployed across the Department of War and other national security agencies for mission-critical applications, such as intelligence analysis, modeling and simulation, operational planning, cyber operations, and more,” he continued.

“Anthropic has also acted to defend America’s lead in AI, even when it is against the company’s short-term interest. We chose to forgo several hundred million dollars in revenue to cut off the use of Claude by firms linked to the Chinese Communist Party (some of whom have been designated by the Department of War as Chinese Military Companies), shut down CCP-sponsored cyberattacks that attempted to abuse Claude, and have advocated for strong export controls on chips to ensure a democratic advantage,” he added.

“Anthropic understands that the Department of War, not private companies, makes military decisions. We have never raised objections to particular military operations nor attempted to limit use of our technology in an ad hoc manner. However, in a narrow set of cases, we believe AI can undermine, rather than defend, democratic values. Some uses are also simply outside the bounds of what today’s technology can safely and reliably do. Two such use cases have never been included in our contracts with the Department of War, and we believe they should not be included now,” Amodei noted.

A statement from Anthropic CEO, Dario Amodei, on our discussions with the Department of War. https://t.co/rM77LJejuk

— Anthropic (@AnthropicAI) February 26, 2026

More from the statement below:

- Mass domestic surveillance. We support the use of AI for lawful foreign intelligence and counterintelligence missions. But using these systems for mass domestic surveillance is incompatible with democratic values. AI-driven mass surveillance presents serious, novel risks to our fundamental liberties. To the extent that such surveillance is currently legal, this is only because the law has not yet caught up with the rapidly growing capabilities of AI. For example, under current law, the government can purchase detailed records of Americans’ movements, web browsing, and associations from public sources without obtaining a warrant, a practice the Intelligence Community has acknowledged raises privacy concerns and that has generated bipartisan opposition in Congress. Powerful AI makes it possible to assemble this scattered, individually innocuous data into a comprehensive picture of any person’s life—automatically and at massive scale.

- Fully autonomous weapons. Partially autonomous weapons, like those used today in Ukraine, are vital to the defense of democracy. Even fully autonomous weapons (those that take humans out of the loop entirely and automate selecting and engaging targets) may prove critical for our national defense. But today, frontier AI systems are simply not reliable enough to power fully autonomous weapons. We will not knowingly provide a product that puts America’s warfighters and civilians at risk. We have offered to work directly with the Department of War on R&D to improve the reliability of these systems, but they have not accepted this offer. In addition, without proper oversight, fully autonomous weapons cannot be relied upon to exercise the critical judgment that our highly trained, professional troops exhibit every day. They need to be deployed with proper guardrails, which don’t exist today.

To our knowledge, these two exceptions have not been a barrier to accelerating the adoption and use of our models within our armed forces to date.

The Department of War has stated they will only contract with AI companies who accede to “any lawful use” and remove safeguards in the cases mentioned above. They have threatened to remove us from their systems if we maintain these safeguards; they have also threatened to designate us a “supply chain risk”—a label reserved for US adversaries, never before applied to an American company—and to invoke the Defense Production Act to force the safeguards’ removal. These latter two threats are inherently contradictory: one labels us a security risk; the other labels Claude as essential to national security.

Regardless, these threats do not change our position: we cannot in good conscience accede to their request.

It is the Department’s prerogative to select contractors most aligned with their vision. But given the substantial value that Anthropic’s technology provides to our armed forces, we hope they reconsider. Our strong preference is to continue to serve the Department and our warfighters—with our two requested safeguards in place. Should the Department choose to offboard Anthropic, we will work to enable a smooth transition to another provider, avoiding any disruption to ongoing military planning, operations, or other critical missions. Our models will be available on the expansive terms we have proposed for as long as required.

We remain ready to continue our work to support the national security of the United States.

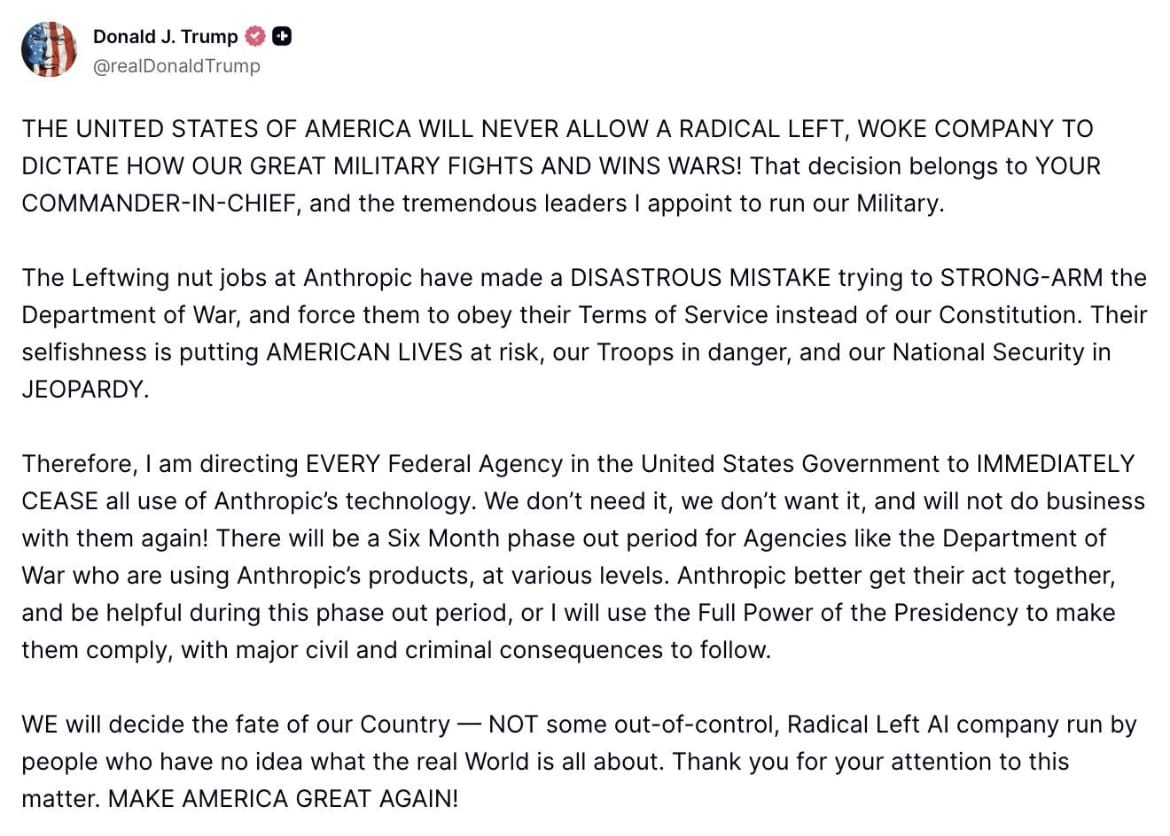

The lengthy statement follows President Trump’s instruction to all federal agencies to “IMMEDIATELY CEASE” the use of Anthropic’s technology.

“THE UNITED STATES OF AMERICA WILL NEVER ALLOW A RADICAL LEFT, WOKE COMPANY TO DICTATE HOW OUR GREAT MILITARY FIGHTS AND WINS WARS! That decision belongs to YOUR COMMANDER-IN-CHIEF, and the tremendous leaders I appoint to run our Military,” Trump said on Truth Social.

“The Leftwing nut jobs at Anthropic have made a DISASTROUS MISTAKE trying to STRONG-ARM the Department of War, and force them to obey their Terms of Service instead of our Constitution. Their selfishness is putting AMERICAN LIVES at risk, our Troops in danger, and our National Security in JEOPARDY,” Trump continued.

“Therefore, I am directing EVERY Federal Agency in the United States Government to IMMEDIATELY CEASE all use of Anthropic’s technology. We don’t need it, we don’t want it, and will not do business with them again! There will be a Six Month phase out period for Agencies like the Department of War who are using Anthropic’s products, at various levels. Anthropic better get their act together, and be helpful during this phase out period, or I will use the Full Power of the Presidency to make them comply, with major civil and criminal consequences to follow. WE will decide the fate of our Country — NOT some out-of-control, Radical Left AI company run by people who have no idea what the real World is all about,” he added.


“This week, Anthropic delivered a master class in arrogance and betrayal as well as a textbook case of how not to do business with the United States Government or the Pentagon. Our position has never wavered and will never waver: the Department of War must have full, unrestricted access to Anthropic’s models for every LAWFUL purpose in defense of the Republic,” Secretary of War Pete Hegseth said.

“Instead, @AnthropicAI and its CEO @DarioAmodei, have chosen duplicity. Cloaked in the sanctimonious rhetoric of ‘effective altruism,’ they have attempted to strong-arm the United States military into submission – a cowardly act of corporate virtue-signaling that places Silicon Valley ideology above American lives. The Terms of Service of Anthropic’s defective altruism will never outweigh the safety, the readiness, or the lives of American troops on the battlefield. Their true objective is unmistakable: to seize veto power over the operational decisions of the United States military. That is unacceptable. As President Trump stated on Truth Social, the Commander-in-Chief and the American people alone will determine the destiny of our armed forces, not unelected tech executives,” he continued.

“Anthropic’s stance is fundamentally incompatible with American principles. Their relationship with the United States Armed Forces and the Federal Government has therefore been permanently altered. In conjunction with the President’s directive for the Federal Government to cease all use of Anthropic’s technology, I am directing the Department of War to designate Anthropic a Supply-Chain Risk to National Security. Effective immediately, no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic. Anthropic will continue to provide the Department of War its services for a period of no more than six months to allow for a seamless transition to a better and more patriotic service. America’s warfighters will never be held hostage by the ideological whims of Big Tech. This decision is final,” Hegseth added.

This week, Anthropic delivered a master class in arrogance and betrayal as well as a textbook case of how not to do business with the United States Government or the Pentagon. Our position has never wavered and will never waver: the Department of War must have full, unrestricted…

— Secretary of War Pete Hegseth (@SecWar) February 27, 2026

CNBC shared further:

But Anthropic is simultaneously facing intense pressure to justify its massive $380 billion valuation, supported by large institutional and strategic investors, while it races to stay on the cutting edge of model development and fend off competition from OpenAI and other rivals including Google and Elon Musk’s xAI. All three of those companies’ models are used by the Defense Department.

Caving to the DoD’s demands could damage Anthropic’s reputation and alienate employees and customers. But if Anthropic refuses to agree to give the military unfettered access to its models, it could lose out on meaningful revenue in the short term and be shut out of potential future opportunities with other companies that do business with the government.

“There are no winners in this,” Lauren Kahn, a senior research analyst at Georgetown’s Center for Security and Emerging Technology, told CNBC in an interview. “It leaves a sour taste in everyone’s mouth.”

Sean Parnell, the chief Pentagon spokesperson, said Thursday that the DoD has “no interest” in using AI for fully autonomous weapons or to conduct mass surveillance of Americans, which he noted is illegal. He said the agency wants Anthropic to agree to allow its models to be used for “all lawful purposes.”

“This is a simple, common-sense request that will prevent Anthropic from jeopardizing critical military operations and potentially putting our warfighters at risk,” Parnell wrote in a post on X on Thursday. “We will not let ANY company dictate the terms regarding how we make operational decisions.”

In a separate post on Thursday, Emil Michael, the undersecretary of defense for research and engineering and a former Uber executive, wrote that Amodei is a “liar and has a God-complex.” He accused Amodei of wanting “nothing more than to try to personally control the U.S. Military.”

Hegseth set Anthropic’s Friday deadline during a meeting with Amodei earlier this week, and warned that punishment for not agreeing could be severe. He said Anthropic could be labeled a “supply chain risk,” a designation that’s typically reserved for companies from countries viewed as adversaries. The label would force DoD vendors and contractors to certify that they don’t use Anthropic’s models.

Amodei said his company won’t be intimidated.

CBS News interviewed Amodei following the clash with the Pentagon.

What’s your take?